BuildFinal_FINAL @ WLab

Overview

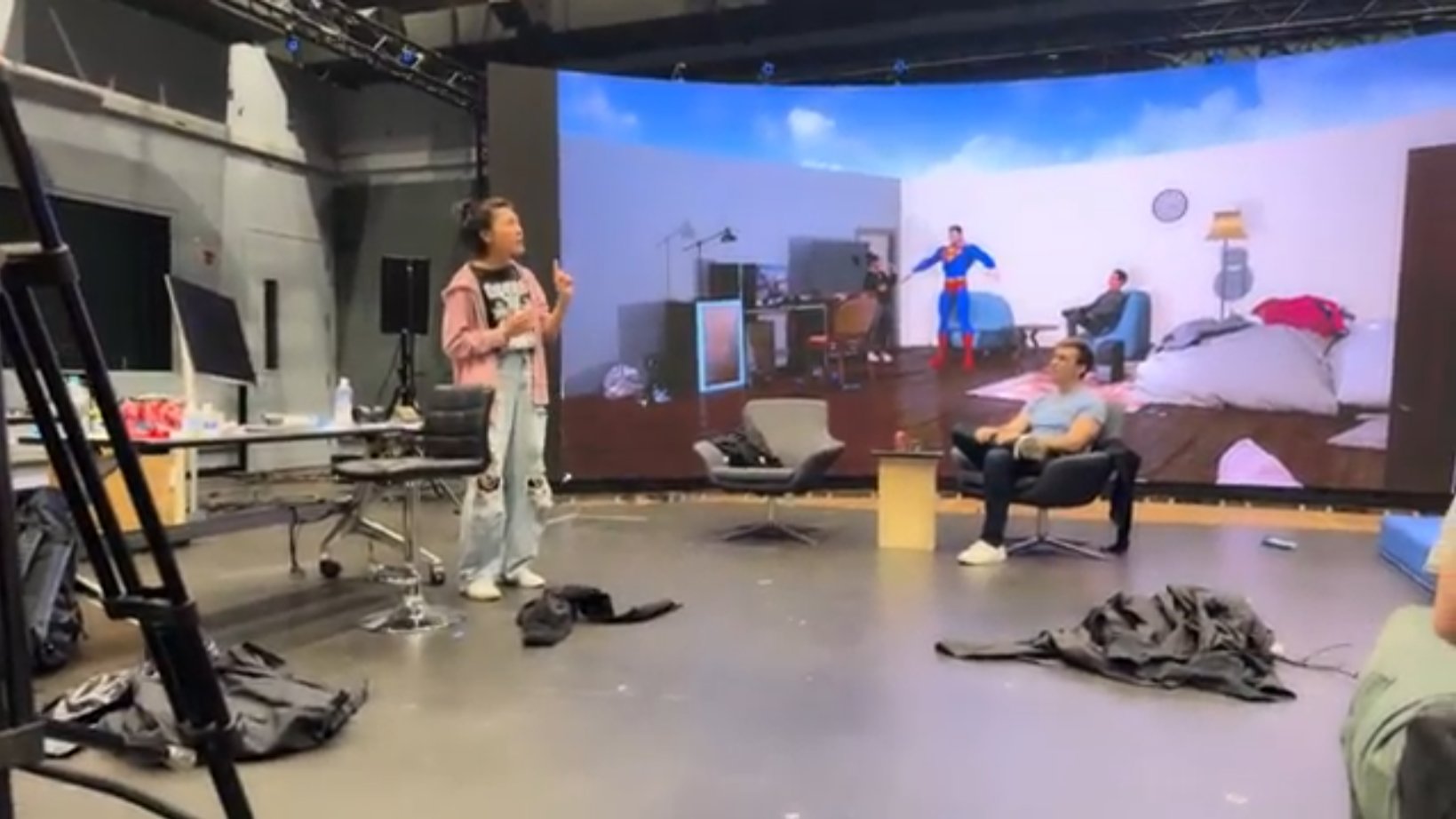

A hybrid theatrical experiment blending live performance, motion capture, and virtual production—rehearsed and performed in an LED volume and streamed simultaneously to in-person and remote audiences.

About the Project

Developed in collaboration with NYU Tandon and WLab, the one-week experiment investigated how audiences process simultaneous physical and virtual performance. The piece used real-time avatar puppeteering via markerless motion capture (Captury) to project live-rendered characters behind the actors on an LED wall. The virtual layer featured visual effects not possible in real life, prompting questions about where "liveness" lives—and whether audiences could engage with both realities at once.

BuildFinal_FINAL was performed at WLab, with the virtual production rendered in Unreal Engine and streamed live on YouTube.

Credits and Collaborators

Written and Directed by Kevin Laibson

Unreal Development by Whitt Sellers

Scenic Design by Whitt Sellers, Yutong Liu, Yidan Hu, and Lila Cicurel

Technical Direction by Whitt Sellers, Yu-Jun Yeh, and Alex Coulombe

Additional Collaboration from the WLab research staff

Performed by Rachel Lin, Jessie Cannizzaro, and Evan Maltby